AI through the ages

The concept of artificial intelligence is as old as the ancient Greeks and as new as the robotic rover currently probing the pinkish terrain of Mars after a 352-million-mile journey from Earth. In between have come "reasoning calculators," "analytical engines," and Leonardo da Vinci's walking lion. Modern AI began with the advent of the computer.

3500 BC

Greek myths of Hephaestus, the god of blacksmiths and artisans, include the notion of intelligent robots.

400 BC

Greek philosopher and mathematician Archytas builds a wooden pigeon that is propelled by steam.

13th century

Spanish mystic and theologian Ramon Llull invents a mechanical device that tries to prove the veracity of ideas.

16th century

Clock makers use their skills to create machine-like animals. German Hans Bullmann crafts the first androids, mechanical figures that simulate people by playing musical instruments.

17th century

French mathematician and philosopher Blaise Pascal invents a mechanical calculating machine. Thomas Hobbes, in his book "Leviathan," suggests that man will create a new intelligence.

18th century

Mechanical toys proliferate, epitomized by automaton dolls and French inventor Jacques de Vaucanson's automated duck, which could flap its wings.

19th century

French weaver Joseph Marie Jacquard, using punch cards, creates a programmable loom. Charles Babbage, an English mathematician and engineer, designs an "analytical engine," a programmable calculating machine that is never completed in his lifetime.

1921

Czech author Karel Čapek introduces the word "robot" in "R.U.R.," a play about machines replacing human workers.

1943

British scientists build Colossus, the world's first programmable electronic digital computer, to crack German codes.

1945

ENIAC (Electronic Numerical Integrator and Computer), the first general-purpose electronic computer, is created at the University of Pennsylvania for the US Army.

1948-49

British scientist William Grey Walter constructs two autonomous robots – turtles – that mimic lifelike behavior.

1950

British mathematician Alan Turing devises a test to recognize machine intelligence.

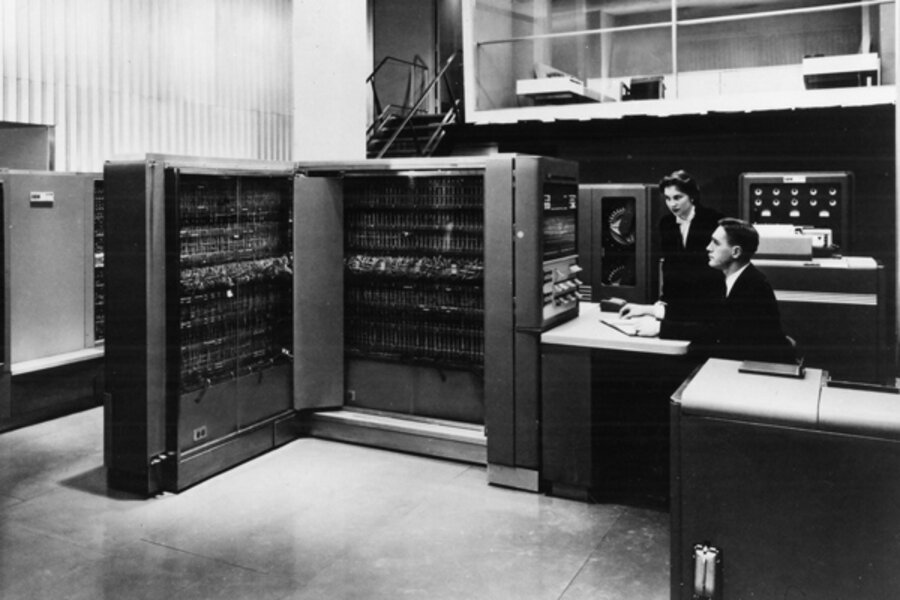

1953

IBM debuts the first commercially successful general-purpose computer.

1956

Computer scientist John McCarthy coins the term "artificial intelligence."

1962

Unimation, a US company, sells the world's first industrial robots.

1966-72

The Stanford Research Institute creates the first mobile robot, Shakey, which can maneuver around simple obstacles.

1968

Stanley Kubrick's movie "2001: A Space Odyssey" comes out, highlighting the dark side of computer intelligence.

1973

Scientists at Edinburgh University in Scotland create Freddy, a robot capable of using vision to locate and assemble parts.

1979

A computer program developed at Carnegie Mellon University in Pittsburgh defeats a world champion backgammon player – the first triumph of machine over man in a board game.

1980s

Expert systems, software programs that mimic the knowledge and decision-making ability of human specialists, spread widely.

1990s

AI advances on many fronts, from speech recognition to intelligent tutoring to investment decision-making to information management.

1997

IBM's Deep Blue supercomputer defeats world chess champion Garry Kasparov.

2000s

Interactive robot pets, called "smart toys," go on sale. Autonomous and talking robots are developed. The UN estimates in 2000 that close to 750,000 industrial robots are in use worldwide.

2011

IBM's Watson supercomputer beats the best human contestants on "Jeopardy!"

2012

The Mars rover Curiosity, bristling with lasers, sensors, and chemical analyzers, begins its exploration of the Red Planet.