How artificial intelligence is changing our lives

Bedford, Mass.

In Silicon Valley, Nikolas Janin rises for his 40-minute commute to work just like everyone else. The shop manager and fleet technician at Google gets dressed and heads out to his Lexus RX 450h for the trip on California's clotted freeways. That's when his chauffeur – the car – takes over. One of Google's self-driving vehicles, Mr. Janin's ride is equipped with sophisticated artificial intelligence technology that allows him to sit as a passenger in the driver's seat.

At iRobot Corporation in Bedford, Mass., a visitor watches as a five-foot-tall Ava robot independently navigates down a hallway, carefully avoiding obstacles – including people. Its first real job, expected later this year, will be as a telemedicine robot, allowing a specialist thousands of miles away to visit patients' hospital rooms via a video screen mounted as its "head." When the physician is ready to visit another patient, he taps the new location on a computer map: Ava finds its own way to the next room, including using the elevator.

In Pullman, Wash., researchers at Washington State University are fitting "smart" homes with sensors that automatically adjust the lighting needed in rooms and monitor and interpret all the movements and actions of their occupants, down to how many hours they sleep and minutes they exercise. It may sound a bit like being under house arrest, but in fact boosters see such technology as a sort of benevolent nanny: Smart homes could help senior citizens, especially those facing physical and mental challenges, live independently longer.

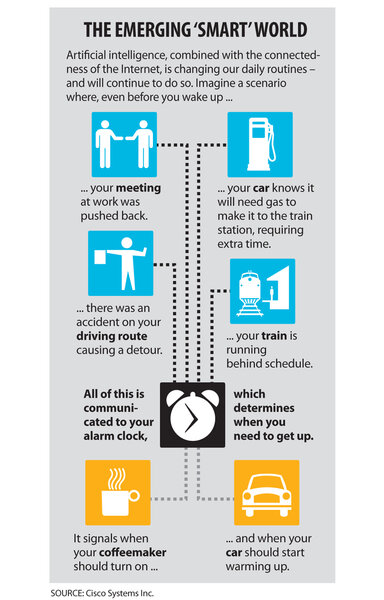

From the Curiosity space probe that landed on Mars this summer without human help, to the cars whose dashboards we can now talk to, to smart phones that are in effect our own concierges, so-called artificial intelligence is changing our lives – sometimes in ways that are obvious and visible, but often in subtle and invisible forms. AI is making Internet searches more nimble, translating texts from one language to another, and recommending a better route through traffic. It helps detect fraudulent patterns in credit-card searches and tells us when we've veered over the center line while driving.

Even your toaster is about to join the AI revolution. You'll put a bagel in it, take a picture with your smart phone, and the phone will send the toaster all the information it needs to brown it perfectly.

In a sense, AI has become almost mundanely ubiquitous, from the intelligent sensors that set the aperture and shutter speed in digital cameras, to the heat and humidity probes in dryers, to the automatic parking feature in cars. And more applications are tumbling out of labs and laptops by the hour.

"It's an exciting world," says Colin Angle, chairman and cofounder of iRobot, which has brought a number of smart products, including the Roomba vacuum cleaner, to consumers in the past decade.

What may be most surprising about AI today, in fact, is how little amazement it creates. Perhaps science-fiction stories with humanlike androids, from the charming Data ("Star Trek") to the obsequious C-3PO ("Star Wars") to the sinister Terminator, have raised unrealistic expectations. Or maybe human nature just doesn't stay amazed for long.

"Today's mind-popping, eye-popping technology in 18 months will be as blasé and old as a 1980 pair of double-knit trousers," says Paul Saffo, a futurist and managing director of foresight at Discern Analytics in San Francisco. "Our expectations are a moving target."

If Siri, the voice-recognition program in newer iPhones and seen in highly visible TV ads, had come out in 1980, "it would have been the most astonishing, breathtaking thing," he says. "But by the time Siri had come, we were so used to other things going on we said, 'Oh, yeah, no big deal.' Technology goes from magic to invisible-and-taken-for-granted in about two nanoseconds."

* * *

In one important sense, the quest for AI has been a colossal failure. The Turing test, proposed by British mathematician Alan Turing in 1950 as a way to verify machine intelligence, gauges whether a computer can fool a human into thinking another human is speaking during a short conversation via text (in Turing's day by teletype, today by online chat). The test sets a low bar: The computer doesn't have to be able to really think like a human; it only has to seem to be human. Yet more than six decades later no AI program has passed Turing's test (though an effort this summer did come close).

The ability to create machine intelligence that mimics human thinking would be a tremendous scientific accomplishment, enabling humans to understand their own thought processes better. But even experts in the field won't promise when, or even if, this will happen.

"We're a long way from [humanlike AI], and we're not really on a track toward that because we don't understand enough about what makes people intelligent and how people solve problems," says Robert Lindsay, professor emeritus of psychology and computer science at the University of Michigan in Ann Arbor and author of "Understanding Understanding: Natural and Artificial Intelligence."

"The brain is such a great mystery," adds Patrick Winston, professor of artificial intelligence and computer science at the Massachusetts Institute of Technology (MIT) in Cambridge. "There's some engineering in there that we just don't understand."

Instead, in recent years the definition of AI has gradually broadened. "Ten years ago, if you asked me if Watson [the computer that defeated all human opponents on the quiz show "Jeopardy!"] was intelligent, I'd probably argue that it wasn't because it was missing something," Dr. Winston says. But now, he adds, "Watson certainly is intelligent. It's a certain kind of intelligence."

The idea that AI must mimic the thinking process of humans has dropped away. "Creating artificial intelligences that are like humans is, at the end of the day, paving the cow paths," Mr. Saffo argues. "It's using the new technology to imitate some old thing."

Entrepreneurs like iRobot's Mr. Angle aren't fussing over whether today's clever gadgets represent "true" AI, or worrying about when, or if, their robots will ever be self-aware. Starting with Roomba, which marks its 10th birthday this month, his company has produced a stream of practical robots that do "dull, dirty, or dangerous" jobs in the home or on the battlefield. These range from smart machines that clean floors and gutters to the thousands of PackBots and other robot models used by the US military for reconnaissance and bomb disposal.

While robots in particular seem to fascinate humans, especially if they are designed to look like us, they represent only one visible form of AI. Two other developments are poised to fundamentally change the way we use the technology: voice recognition and self-driving cars.

* * *

In the 1986 sci-fi film "Star Trek IV: The Voyage Home," the engineer of the 23rd century starship Enterprise, Scotty, tries to talk to a 20th-century computer.

Scotty: "Computer? Computer??"

He's handed a computer mouse and speaks into it.

Scotty: "Ah, hello Computer!"

Silence.

20th-century scientist: "Just use the keyboard."

Scotty: "A keyboard? How quaint!"

Computers that easily understand what we say, or perhaps watch our gestures and anticipate what we want, have long been a goal of AI. Siri, the AI-powered "personal assistant" built into newer iPhones, has gained wide attention for doing the best job yet, even though it's often as much mocked for what it doesn't understand as admired for what it does.

Apple's Siri – and other AI-infused voice-recognition software such as Google's voice search – is important not only for what it can do now, like make a phone call or schedule an appointment, but for what it portends. Siri might understand human conversation at the level of a kindergartner, but it still is magnitudes ahead of earlier voice-recognition programs.

"Siri is a big deal," says Saffo. It's a step toward "devices that we interact with in ever less formal ways. We're in an age where we're using the technology we have to create ever more empathetic devices. Soon it will become de rigueur for all applications to offer spoken interaction.... In fact, we consumers will be surprised and disappointed if or when they don't."

Siri is a first step toward the ultimate vision of a VPA (virtual personal assistant), say Norman Winarsky and Bill Mark, who teamed up to develop Siri at the research firm SRI International before the software was bought by Apple. "Siri required not just speech recognition, but also understanding of natural language, context, and ultimately, reasoning (itself the domain of most artificial intelligence research today).... We think we've only seen the tip of the iceberg," they wrote in an article on TechCrunch last spring.

In the near future, VPAs will become more useful, helping humans do tasks such as weigh health-care alternatives, plan a vacation, and buy clothes.

Or drive your car. Vehicles that pilot themselves and leave humans as passive passengers are already being road-tested. "I expect it to happen," says AI expert Mr. Lindsay. One advantage, he says, tongue in cheek: A vehicle driven by AI "won't get distracted by putting on its makeup."

While Google's Janin rides in a self-driving car, he doesn't talk on the phone, read his favorite blogs, or even sneak in a little catnap on the way to work – all tempting diversions. Instead, he analyzes and monitors the data derived from the car as it makes its way from his home in Santa Clara to Google's headquarters in Mountain View. "Since the car is driving for me, though, I have this relaxed, stress-free feeling about being in stop-and-go traffic," he says. "Time just seems to go by faster."

Cars that drive themselves, once the stuff of science fiction, may be in garages relatively soon. A report by the consulting firm KPMG and the Center for Automotive Research, a nonprofit group in Michigan, predicts that autonomous cars will make their debut by 2019.

Google's self-driving cars, a fleet of about a dozen, are the most widely known. But many big automotive manufacturers, including Ford, Audi, Honda, and Toyota, are also investing heavily in autonomous vehicles.

At Google, the vehicles are fitted with a complex system of scanners, radars, lasers, GPS devices, cameras, and software. Before a test run, a person must manually drive the desired route and create a detailed map of the road's lanes, traffic signals, and other objects. The information is then downloaded into the vehicle's integrated software. When the car is switched to auto drive, the equipment monitors the roadway and sends the data back to the computer. The software makes the necessary speed and steering adjustments. Drivers can always take over if necessary; but in the nearly two years since the program was launched, the cars have logged more than 300,000 miles without an incident.
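The monitor-and-adjust cycle described above can be pictured as a simple loop: read the sensors, compare against the pre-built map, and nudge speed and steering. The sketch below is only an illustration of that idea, not Google's software. The `SensorReading` fields, the `plan_adjustment` function, and the 100-foot safe gap are all hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass
class SensorReading:
    """One snapshot of what the car's equipment reports (hypothetical fields)."""
    speed_mph: float        # current speed
    lane_offset_ft: float   # positive means the car has drifted right of lane center
    gap_ahead_ft: float     # distance to the nearest object ahead


def plan_adjustment(reading, mapped_speed_limit_mph, safe_gap_ft=100.0):
    """Return (speed_change_mph, steering_correction_ft) for one control step.

    The speed limit comes from the map recorded during the manual drive;
    safe_gap_ft is an assumed threshold, not a figure from the article.
    """
    if reading.gap_ahead_ft < safe_gap_ft:
        # Too close to something ahead: slow down in proportion to the missing gap.
        shortfall = 1.0 - reading.gap_ahead_ft / safe_gap_ft
        speed_change = -reading.speed_mph * shortfall
    else:
        # Road is clear: move toward the mapped speed limit.
        speed_change = mapped_speed_limit_mph - reading.speed_mph
    # Steer back toward the center of the lane.
    steering = -reading.lane_offset_ft
    return speed_change, steering


# A car doing 60 mph, half a foot right of center, with only 50 feet of clear road.
print(plan_adjustment(SensorReading(60.0, 0.5, 50.0), mapped_speed_limit_mph=65.0))
```

A real vehicle would run a far richer version of this many times a second and fuse data from many sensors. The point here is only the shape of the cycle: sense, compare with the map, adjust.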

While it remains uncertain how quickly the public will embrace self-driving vehicles – what happens when one does malfunction? – the authors of the KPMG report make a strong case for them. They cite reduced commute times, increased productivity, and, most important, fewer accidents.

Speaking at the Mobile World Congress in Barcelona, Spain, earlier this year, Bill Ford, chairman of Ford Motor Company, argued that vehicles equipped with artificial intelligence are critically important. "If we do nothing, we face the prospect of 'global gridlock,' a never-ending traffic jam that wastes time, energy, and resources, and even compromises the flow of commerce and health care," he said.

Indeed, a recent study by Patcharinee Tientrakool of Columbia University in New York estimates that self-driving vehicles – ones that not only manage their own speed but communicate intelligently with each other – could increase our highway capacity by 273 percent.

The challenges that remain are substantial. An autonomous vehicle must be able to think and react as a human driver would. For example, when a ball rolls into the road, a human driver deduces that a child is likely nearby and slows down. Right now AI does not provide that type of inferential thinking, according to the report.

But the technology is getting closer. New models already on the market come with driver-assistance features not found just a few years ago – including self-parallel parking, lane-drift warning signals, and cruise control adjustments.

Lawmakers are grappling with the new technology, too. Earlier this year the state of Nevada issued the first license for autonomous vehicles in the United States, while the California Legislature recently approved allowing the eventual testing of the vehicles on public roads. Florida is considering similar legislation.

"It's hard to say precisely when most people will be able to use self-driving cars," says Janin, who gets a "thumbs up" from a lot of people who recognize the car. "But it's exciting to know that this is clearly the direction that the technology and the industry are headed."

* * *

At first glance, the student apartment at Washington State University (WSU) in Pullman appears just like any other college housing: sparse furnishings, a laptop askew on the couch, a television and DVD player in the corner, a "student survival guide" sitting out stuffed with coupons for everything from haircuts to pizza.

But a closer examination reveals some unusual additions. The light switch on the wall adjoining the kitchen glows blue and white. Special sensors are affixed to the refrigerator, the cupboard doors, and the microwave. A water-flow gauge sits under the sink.

All are part of the CASAS Smart Home project at WSU, which is tapping AI technology to make the house operate more efficiently and improve the lives of its occupants, in this case several graduate students. The project began in 2006 under the direction of Diane Cook, a professor in The School of Electrical Engineering and Computer Science.

A smart home set up by the WSU team might have 40 to 50 motion or heat sensors. No cameras or microphones are used, unlike some other projects across the country.

The motion sensors allow researchers to know where someone is in the home. They gather intelligence about the dwellers' habits. Once the system becomes familiar with an individual's movements, it can determine whether certain activities have happened or not, like the taking of medication or exercising. Knowing the time of day and what the person typically does "is usually enough to distinguish what [the person is] doing right now," says Dr. Cook.
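Dr. Cook's point, that the time of day plus a person's usual routine is often enough to tell what he or she is doing, can be illustrated with a toy lookup. The sketch below is not the CASAS project's method. It simply counts which activity was most often recorded for a given hour and room, using a made-up log (`OBSERVATIONS`) and hypothetical function names.

```python
from collections import Counter, defaultdict

# Hypothetical training log: (hour of day, room where motion was sensed, labeled activity).
OBSERVATIONS = [
    (7, "kitchen", "taking medication"),
    (7, "kitchen", "taking medication"),
    (7, "kitchen", "cooking"),
    (18, "kitchen", "cooking"),
    (18, "kitchen", "cooking"),
    (17, "living room", "exercising"),
    (23, "bedroom", "sleeping"),
]


def build_habit_table(observations):
    """Count how often each activity was seen for every (hour, room) pair."""
    table = defaultdict(Counter)
    for hour, room, activity in observations:
        table[(hour, room)][activity] += 1
    return table


def guess_activity(table, hour, room):
    """Return the most frequently seen activity for this hour and room, or None."""
    counts = table.get((hour, room))
    if not counts:
        return None  # nothing has been learned about this time and place yet
    return counts.most_common(1)[0][0]


HABITS = build_habit_table(OBSERVATIONS)
print(guess_activity(HABITS, 7, "kitchen"))   # most common 7 a.m. kitchen activity
print(guess_activity(HABITS, 3, "garage"))    # unseen combination
```

With this invented log, motion in the kitchen at 7 a.m. is read as taking medication, because that is what was recorded there most often at that hour. If that morning motion stopped appearing, a caregiver could be alerted.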

A main focus of the WSU research is senior living. With the aging of baby boomers becoming an impending crisis for the health-care industry, Cook is searching for a way to allow older adults – especially those with dementia or mild impairments – to live independently for longer periods while decreasing the burden on caregivers. A large assisted-care facility in Seattle is now conducting smart-home technology research in 20 apartments for older individuals. A smart home could also monitor movements for clues about people's general health.

"If we're able to develop technology that is very unobtrusive and can monitor people continuously, we may be able to pick up on changes the person may not even recognize," says Maureen Schmitter-Edgecombe, a professor in the WSU psychology department who is helping with the research.

Sensors seem poised to become omnipresent. In a glimpse of the future, an entire smart city is being built outside Seoul, South Korea. Scheduled to be completed in 2017, Songdo will bristle with sensors that regulate everything from water and energy use to waste disposal – and even guide vehicle traffic in the planned city of 65,000.

While for many people such extensive monitoring might engender an uncomfortable feeling of Big Brother, AI-imbued robots or other devices also may prove to be valuable and (seemingly) compassionate companions, especially for seniors. People already form emotional attachments to AI-infused devices.

"We love our computers; we love our phones. We are getting that feeling we get from another person," said Apple cofounder Steve Wozniak at a forum last month in Palo Alto, Calif.

The new movie "Robot & Frank," which takes place in the near future, depicts a senior citizen who is given a robot and rejects it at first. But "bit by bit the two affect each other in unforeseen ways," notes a review at Filmjournal.com. "Not since Butch Cassidy and the Sundance Kid has male bonding had such a meaningful but comic connection.... [P]erfect partnership is the movie's heart."

* * *

Not everything about AI may yield happy consequences. Besides spurring concerns about invasion of privacy, AI looks poised to eliminate large numbers of jobs for humans, especially those that require a limited set of skills. One joke notes that "a modern textile mill employs only a man and a dog – the man to feed the dog, and the dog to keep the man away from the machines," as an article earlier this year in The Atlantic magazine put it.

"This so-called jobless recovery that we're in the middle of is the consequence of increased machine intelligence, not so much taking away jobs that exist today but creating companies that never have jobs to begin with," futurist Saffo says. Facebook grew to be a multibillion-dollar company, but with only a handful of employees in comparison with earlier companies of similar market value, he points out.

Another futurist, Thomas Frey, predicts that more than 2 billion jobs will disappear by 2030 – though he adds that new technologies will also create many new jobs for those who are qualified to do them.

Analysts have already noted a "hollowing out" of the workforce. Demand remains strong for highly skilled and highly educated workers and for those in lower-level service jobs like cooks, beauticians, home care aides, or security guards. But robots continue to replace workers on the factory floor. Amazon recently bought Kiva Systems, which uses robots to move goods around warehouses, greatly reducing the need for human employees.

AI is creeping into the world of knowledge workers, too. "The AI revolution is doing to white-collar jobs what robotics did to blue-collar jobs," say Erik Brynjolfsson and Andrew McAfee, authors of "Race Against the Machine."

Lawyers can use smart programs instead of assistants to research case law. Forbes magazine uses an AI program called Narrative Science, rather than reporters, to write stories about corporate profits. Tax preparation software and online travel sites take work previously done by humans. Businesses from banks to airlines to cable TV companies have put the bulk of their customer service work in the hands of automated kiosks or voice-recognition systems.

"While we're waiting for machines [to be] intelligent enough to carry on a long and convincing conversation with us, the machines are [already] intelligent enough to eliminate or preclude human jobs," Saffo says.

* * *

The best argument that AI has a bright future may be made by fully acknowledging just how far it's already come. Take the Mars Curiosity rover.

"It is remarkable. It's absolutely incredible," enthuses AI expert Lindsay. "It certainly represents intelligence." No other biological organism on earth except man could have done what it has done, he says. But at the same time, "it doesn't understand what it is doing in the sense that human astronauts [would] if they were up there doing the same thing," he says.

Will machines ever exhibit that kind of humanlike intelligence, including self-awareness (which, ominously, brought about a "mental" breakdown in the AI system HAL in the classic sci-fi movie "2001: A Space Odyssey")?

"I think we've passed the Turing test, but we don't know it," argued Pat Hayes, a senior research scientist at the Florida Institute for Human and Machine Cognition in Pensacola, in the British newspaper The Telegraph recently. Think about it, he says. Anyone talking to Siri in 1950 when Turing proposed his test would be amazed. "There's no way they could imagine it was a machine – because no machine could do anything like that in 1950."

But others see artificial intelligence remaining rudimentary for a long time. "Common sense is not so common. It requires an incredible breadth of world understanding," says iRobot's Angle. "We're going to see more and more robots in our world that are interactive with us. But we are a long way from human-level intelligence. Not five years. Not 10 years. Far away."

Even MIT's Winston, a self-described techno-optimist, is cautious. "It's easy to predict the future – it's just hard to tell when it's going to happen," he says. Today's AI rests heavily on "big data" techniques that crunch huge amounts of data quickly and cheaply – sifting through mountains of information in sophisticated ways to detect meaningful relationships. But it doesn't mimic human reasoning. The long-term goal, Winston says, is to somehow merge this "big data" approach with the "baby steps" he and other researchers now are taking to create AI that can do real reasoning.

Winston speculates that the field of AI today may be at a place similar to where biology was in 1950, three years before the discovery of the structure of DNA. "Everybody was pessimistic, [saying] we'll never figure it out," he says. Then the double helix was revealed. "Fifty years of unbelievable progress in biology" has followed, Winston says, adding: AI just needs "one or two big breakthroughs...."

• Carolyn Abate in San Francisco and Kelcie Moseley in Pullman, Wash., contributed to this report.