What is Facebook's responsibility when people broadcast crimes?

The apparent sexual assault of a Chicago teenager, broadcast on Facebook Live, has reignited debate about the feature and Facebook’s responsibility to address crimes.

On Sunday afternoon, a girl disappeared after being dropped off near her Chicago home. When she didn’t return home that day, her mother filed a police report. It was not until Monday, however, that her family learned that an apparent sexual assault on the girl had been broadcast on Facebook Live. None of the 40 viewers had reported the incident through Facebook’s content-review system, but a teenager mentioned the video to Reginald King, one of the girl’s relatives. After the police saw screen grabs of that video, they launched an investigation. The girl was reunited with her mother on Tuesday.

For many observers, the most troubling aspect of the alleged assault is that no one reported it, either to Facebook or to the police. If even one person had reported the incident, Facebook would have reviewed the video and might have been able to take it down sooner. The silence also raises the question of how to prevent similar abuses of the platform in the future.

"It hurts me to my core because I was one of the last people to see her [before the assault]," Mr. King told the Chicago Tribune. "I want to make sure this never happens to anybody else's kids."

This is not the first time that Facebook’s fledgling Live feature has documented abuse. In January, four people were arrested after broadcasting a video that showed them taunting and beating a man with special needs in Chicago. And last summer, the platform bore witness to the shooting death of Philando Castile, a black man, by a police officer in Minnesota.

Facebook takes its "responsibility to keep people safe on Facebook very seriously," Facebook spokeswoman Andrea Saul told the Associated Press. "Crimes like this are hideous and we do not allow that kind of content on Facebook."

In response to concerns about inappropriate content, particularly on Facebook Live, the tech giant has an around-the-clock content management team. The Facebook community is asked to flag any posts that they think are inappropriate, and the team then looks at all flagged content to decide whether it should be taken down.

In this case, however, that didn’t happen – because no one ever flagged the post for review.

Technically, that isn’t Facebook’s problem. But the tech giant, which is increasingly aware of its social responsibility, may be motivated to find a solution.

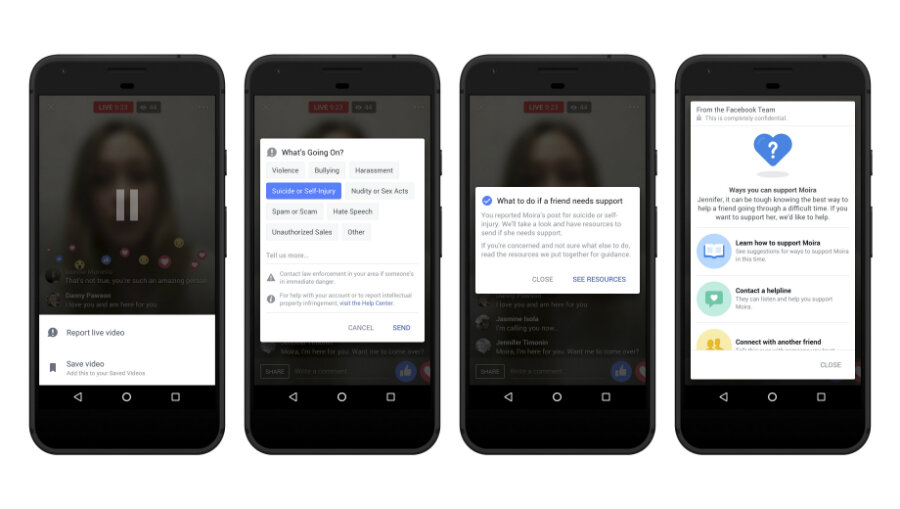

To help address Facebook Live suicides, for instance, the company introduced new prevention tools that offer users help in real time. Offering practical steps to dissuade those considering taking their own lives, and to help those concerned about a loved one, is a significant step forward. Facebook may find a similar way to encourage users to speak up and connect them directly with local authorities.

Some say the Facebook Live violence points to a challenge that society as a whole must confront.

“As a society we have to ask ourselves, how did it get to the point where young men feel like it’s a badge of honor to sexually assault a girl ... to not only do this to a girl, but broadcast it for the world?” Mr. King asked.

This report contains material from the Associated Press.