What comes after silicon?

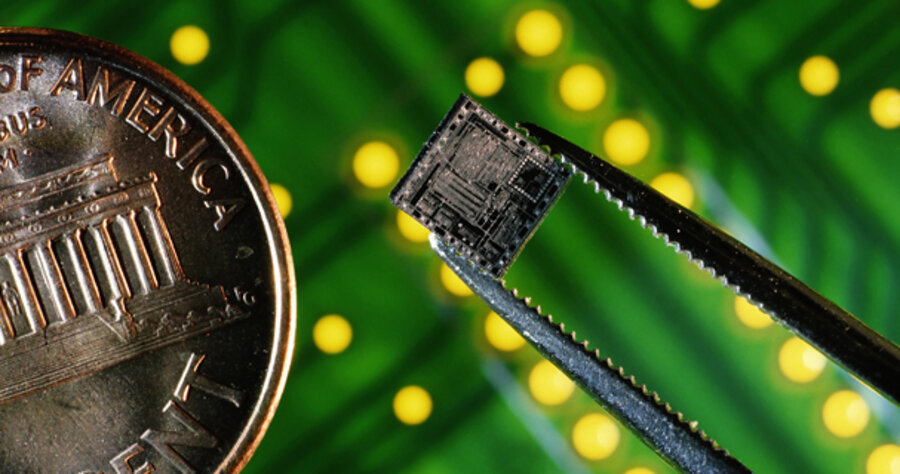

The heart of every computer made today is an integrated circuit (or “chip”) largely made of silicon. This common element, which makes up about a quarter of the earth’s crust by mass, can be found in such mundane items as beach sand and window glass. But in computer chips, silicon has had its brightest hour, powering a technological revolution that changed the world as much as the steam engine or the assembly line.

Using silicon, engineers have been able to pack more punch onto the same size chip, doubling the number of components on a given piece of silicon roughly every two years.

But soon the industry will hit a wall, scientists say. Silicon chips can only be stretched so thin. And as the individual components on a chip get smaller, engineers are reaching the bounds of what’s physically possible. Could silicon’s reign in the computer industry be drawing to a close?

“The real magic of integrated circuit technology has been that we can increase the density while reducing the cost,” explains Craig Sander, corporate vice president of technology development for Advanced Micro Devices (AMD), a chipmaker in Sunnyvale, Calif.

Chipmakers have pulled this off by figuring out new ways to cram more and smaller transistors onto a single chip. Transistors are basically tiny electrical switches and are the reason that computers use binary code – the 1s mean “on” and 0s mean “off.”

“By setting them up in different arrangements, engineers create a circuit that can store a value (for example, inside a memory chip) or perform a calculation (that could be used in a microprocessor),” says Mr. Sander. “The result is you get more for less, because we can so efficiently increase the density of transistors on a chip.”
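To make the idea of “different arrangements” concrete, here is a toy sketch in Python. It treats each gate as a simple function of on/off inputs and wires a few of them into a circuit that performs a calculation (a one-bit adder) and one that stores a value (a one-bit latch). The function names are illustrative assumptions, not anything described by AMD or Intel, and a real chip builds each gate from several transistors rather than from software.

```python
def nand(a: int, b: int) -> int:
    """Toy NAND gate: output is 0 only when both inputs are 1 ("on")."""
    return 0 if (a and b) else 1


def nor(a: int, b: int) -> int:
    """Toy NOR gate: output is 1 only when both inputs are 0 ("off")."""
    return 0 if (a or b) else 1


def xor(a: int, b: int) -> int:
    """Exclusive OR built from four NAND gates."""
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))


def and_gate(a: int, b: int) -> int:
    """AND built from two NAND gates."""
    n = nand(a, b)
    return nand(n, n)


def half_adder(a: int, b: int) -> tuple[int, int]:
    """Perform a calculation: add two bits, returning (sum_bit, carry_bit)."""
    return xor(a, b), and_gate(a, b)


def sr_latch(set_bit: int, reset_bit: int, stored: int) -> int:
    """Store a value: two cross-coupled NOR gates hold one bit.

    `stored` is the bit currently held. Set and reset are assumed
    never to be 1 at the same time.
    """
    q = stored
    q_bar = nor(set_bit, q)
    for _ in range(3):  # let the feedback loop settle
        q = nor(reset_bit, q_bar)
        q_bar = nor(set_bit, q)
    return q


print(half_adder(1, 1))   # 1 + 1 in binary: sum bit 0, carry bit 1
print(sr_latch(1, 0, 0))  # "set" turns the stored bit on
print(sr_latch(0, 0, 1))  # with no input, the latch remembers its bit
```

The same handful of switch-like gates yields either arithmetic or memory depending only on how they are connected, which is the point Sander makes above.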

This trend, first predicted by Intel co-founder Gordon Moore in 1965, has produced modern computers that are enormously more powerful than their early predecessors. The constant doubling of power every two years was soon termed “Moore’s Law.”

Design, on an atomic level

Intel’s first microprocessor, produced in 1971, had 2,300 transistors on it, according to Mark Bohr, director of process architecture and integration and a senior fellow at Intel. The company’s latest chips have about 2 billion transistors.
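Those two figures can be checked against the two-year doubling rule with a few lines of Python. This is a rough sketch: the transistor counts come from the paragraph above, but the end year of 2008 is an assumption standing in for “latest,” and the function names are made up for illustration.

```python
import math


def doublings_between(start_count: float, end_count: float) -> float:
    """How many times start_count must double to reach end_count."""
    return math.log2(end_count / start_count)


def implied_doubling_period(start_year: int, end_year: int,
                            start_count: float, end_count: float) -> float:
    """Average number of years per doubling over the interval."""
    return (end_year - start_year) / doublings_between(start_count, end_count)


FIRST_CHIP_YEAR = 1971
FIRST_CHIP_TRANSISTORS = 2_300
LATEST_CHIP_YEAR = 2008            # assumption: not stated in the article
LATEST_CHIP_TRANSISTORS = 2_000_000_000

doublings = doublings_between(FIRST_CHIP_TRANSISTORS, LATEST_CHIP_TRANSISTORS)
period = implied_doubling_period(FIRST_CHIP_YEAR, LATEST_CHIP_YEAR,
                                 FIRST_CHIP_TRANSISTORS, LATEST_CHIP_TRANSISTORS)

print(f"{doublings:.1f} doublings, about one every {period:.2f} years")
```

Under that assumed end year, the count works out to a little under 20 doublings, or one roughly every two years, consistent with the trend described above.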

Until recently, the smallest transistor that could be placed on a chip was 65 nanometers (nm) across. That’s about 400,000 times smaller than an inch. To try to put this in perspective, if you took the latest Intel Core 2 Duo chip (144 square millimeters in size) and blew it up to the size of Colorado, the 65 nm transistor would be only 9 feet across. But to keep Moore’s Law humming, even 65 nm wasn’t enough.
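The scale comparison is easy to reproduce. The sketch below assumes the chip and Colorado are both treated as squares of equal area to their real shapes, and it uses an approximate figure of 269,600 square kilometers for Colorado's area; neither assumption is stated in the article, so the result is only a sanity check on the numbers quoted.

```python
import math

CHIP_AREA_MM2 = 144            # from the article
COLORADO_AREA_KM2 = 269_600    # assumption: approximate area of Colorado
TRANSISTOR_M = 65e-9           # 65 nanometers, in meters
METERS_PER_FOOT = 0.3048
METERS_PER_INCH = 0.0254


def linear_scale(chip_area_mm2: float, target_area_km2: float) -> float:
    """Magnification needed to stretch a square chip to a square of the target area."""
    chip_side_m = math.sqrt(chip_area_mm2) * 1e-3
    target_side_m = math.sqrt(target_area_km2) * 1e3
    return target_side_m / chip_side_m


scale = linear_scale(CHIP_AREA_MM2, COLORADO_AREA_KM2)
transistor_ft = TRANSISTOR_M * scale / METERS_PER_FOOT
inch_ratio = METERS_PER_INCH / TRANSISTOR_M

print(f"An inch is about {inch_ratio:,.0f} times wider than a 65 nm transistor")
print(f"Scaled-up transistor: about {transistor_ft:.1f} feet across")
```

With these inputs the magnified transistor comes out a little over 9 feet wide, and an inch is a bit under 400,000 times the transistor's width, in line with the figures above.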

Companies are now producing chips based on 45 nm devices, but as transistors get smaller and smaller, the laws of physics loom larger and larger. Sander points out that at the scales chipmakers are now working, objects that we consider to be infinitesimally small start to become significant factors.

“All of the physical features that form transistors or the connections between transistors are made up of atoms and molecules,” he says. “These atoms and molecules are the fundamental building blocks and their dimensions just cannot be reduced. As transistors or their components continue to get smaller, we will reach a point where the placement of individual atoms will affect their behavior.”

Chipmakers at Intel have already had to face this problem, says Mr. Bohr. For a chip to work correctly, the thickness of its silicon layers needs to shrink proportionally to the length and width. For example, at the 90 and 65 nm horizontal sizes, the “gate oxide” layer, which acts as an electrical insulator between conductive layers, is only 1.2 nm thick (about 2 inches in our Colorado-size version). This is roughly the thickness of five individual atoms, according to Bohr.

The problem was that at 45 nm, the gate oxide would have to be even thinner – so thin that electrons would start tunneling through it, ruining its properties as an insulator. Intel worked around this problem by using a new layer based on the element hafnium.

Looking beyond silicon

There has been recent discussion that more esoteric computing technologies might provide the breakthrough needed to keep Moore’s Law alive.

Optical computing, which would use photons rather than electrons, is one idea. But both Bohr and Sander agree that optical technology works best to connect processors together over a distance, rather than inside the chips themselves.

Another contender is quantum computing, which uses the attributes of elementary particles such as electrons as the basis for calculation.

As opposed to traditional digital computing, where a bit of data is either a 1 or a 0, in quantum computing a bit can be both at once. Again, neither Bohr nor Sander sees quantum computing having much utility except in some specialized areas such as cryptography, at least in the short term.

The good news for Moore’s Law is that it seems healthy for at least another decade. Intel’s Bohr expects at least another 10 years of doubling every two years, while Sander sees innovations on the horizon that could keep the trend on track through 2020. AMD is already developing the new technology needed for 16 nm transistors, which are on its road map for 2014.

And beyond that? “The industry is now looking for some new physics,” says Sander. “We have used what we call ‘charge-based physics’ since the days of vacuum tubes. Now the Nanoelectronics Research Initiative, of which AMD is a member, is sponsoring ... university research to find new physical-switching mechanisms that don’t require the movement of [an] electronic charge. It is too soon to tell, but this is the kind of work that could allow Moore’s Law to continue well beyond 2020.”