When the Internet breaks, who ya gonna call?

At this point, it’s hard to imagine life without the Internet, at least in the developed world. But buried underneath the breathtaking Web applications and streaming media that we use on a daily basis, the actual software that makes the Internet work is starting to show its age.

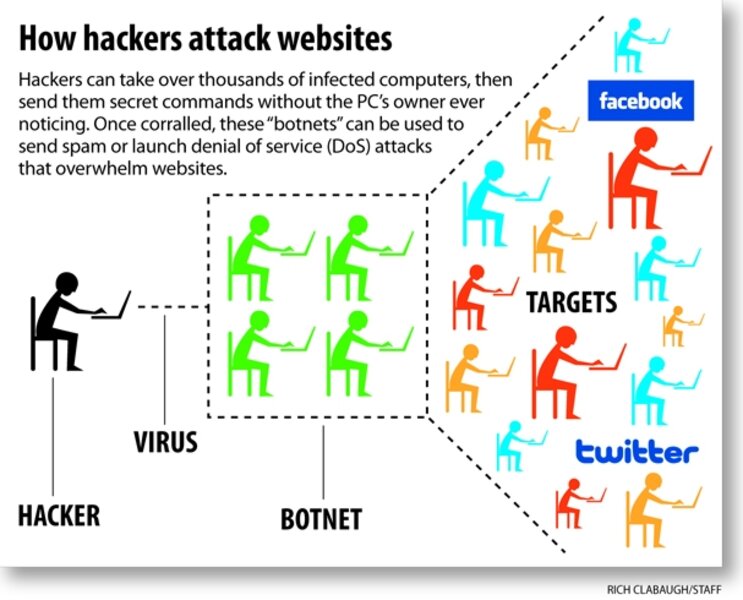

As recent events have demonstrated all too clearly, the Internet is especially vulnerable to deliberate attacks. Massive networks of hijacked computers, known as “botnets,” can be used to deluge target websites with enough traffic to essentially shut them down, much as a radio station running a call-in contest will have a constantly busy phone number. These attacks succeed, at least partially, because they are able to exploit weaknesses in the existing Internet protocols.

Twitter, the social-networking site with millions of users, was the victim of just such a denial of service (DoS) attack in early August. There is much speculation that the attack was triggered by the postings of a Georgian separatist, but whatever the root cause, all it took was a few keystrokes to unleash a botnet’s fury against Twitter, taking it down for half a day.

Like a jazzy sports car that has never had its oil changed, the underlying protocols of the Internet have remained largely unchanged since it came into being in the mid-1980s. The Internet can be surprisingly fragile at times and is vulnerable to attack.

The Internet evolved from the experimental military ARPAnet project, where technical decisions were made by consensus among the researchers involved. When consensus was reached, changes were made throughout the entire network. As it became clear that there was interest in the uses of the Internet beyond the limited research community it encompassed, the military (and later the National Science Foundation, which inherited the Internet) opened it up gradually to commercial traffic.

The Internet grew too big too fast, says John Doyle, professor of electrical engineering at the California Institute of Technology in Pasadena, Calif.

“The original was just an experimental demo, not a finished product,” he says. “And ironically, [the originators] were just too good and too clever. They made something that was such a fantastic platform for innovation that it got adopted, proliferated, used, and expanded like crazy. Nothing’s perfect.”

Rather than create a more robust network using the lessons we learned from the ARPAnet and the early days of the Internet, we’ve instead been patching it up for the past two and a half decades, Dr. Doyle says.

Unfortunately, the spirit of trust that had typified the ARPAnet and early Internet doesn’t hold up so well today. Many of the underlying computer protocols assume that everyone is an honest player, and increasingly there have been incidents where malicious parties have exploited this trust for their own purposes.

A glaring example is “DNS poisoning.” The domain name system (DNS) is the part of the Internet responsible for turning a name such as CSMonitor.com into the Internet version of a street address, which in this case is 66.114.52.47.

Because DNS servers trust one another, it is possible for a wily wrongdoer to convince a server to start providing the wrong number for a name and send Web surfers to the wrong website, perhaps a malicious one. That’s probably not a catastrophe when it’s CSMonitor.com, but potentially devastating if it’s BankOfAmerica.com.
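The trust problem described above can be illustrated with a toy model. This is a minimal sketch, not real DNS: the `TrustingResolver` class and its methods are hypothetical names invented for illustration, and the forged address is taken from a range reserved for documentation. It only shows why a cache that believes any answer it is handed can be steered to the wrong address.

```python
# Toy model of a caching name resolver that trusts every answer it is given.
# Hypothetical names throughout; this does not speak the real DNS protocol.

class TrustingResolver:
    def __init__(self, authoritative_records):
        # The "true" name-to-address records, as a plain dictionary.
        self.authoritative_records = authoritative_records
        self.cache = {}

    def accept_answer(self, name, address):
        # No authentication at all: whatever arrives is stored and believed.
        self.cache[name] = address

    def resolve(self, name):
        # Serve from the cache when possible, otherwise ask the true source.
        if name not in self.cache:
            self.cache[name] = self.authoritative_records[name]
        return self.cache[name]


resolver = TrustingResolver({"csmonitor.com": "66.114.52.47"})

honest = resolver.resolve("csmonitor.com")

# An attacker slips in a forged answer (illustrative address).
resolver.accept_answer("csmonitor.com", "203.0.113.66")
poisoned = resolver.resolve("csmonitor.com")

print(honest)    # the address from the true records
print(poisoned)  # the forged address now served to every later lookup
```

Once the forged entry is in the cache, every later lookup returns it, which is why a single successful deception can misdirect many users.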

Vinton Cerf, widely considered the “father of the Internet,” believes that this problem can be reduced by using cryptography to validate DNS records – but it will take time and maybe a little strong-arming to update the world’s Internet hubs.

Mr. Cerf thinks that increased use of cryptographic authentication can also help in other areas, such as spam e-mail. Yet to Doyle, it is just another example of the patchwork fixes that he says epitomize the Internet.

The Internet can also suffer problems due to human error, sometimes with frustrating results.

When Pakistan tried to ban the video-sharing site YouTube in February 2008, the government mistakenly told computers around the world that Pakistan was now the best route to the site. As a result, every YouTube video started flowing through Pakistan’s relatively anemic data pipeline, causing a huge bottleneck and preventing most people from getting to the site at all.
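One way a mistaken announcement can win out is that routers generally prefer the most specific matching route to a destination. The sketch below assumes that mechanism; the article itself does not say exactly how the bad route was chosen. The `best_route` helper, the private-range prefixes, and the origin labels are all hypothetical and chosen only for illustration.

```python
import ipaddress


def best_route(destination, routes):
    """Return the origin whose announced prefix is the most specific match."""
    addr = ipaddress.ip_address(destination)
    matches = [(net, origin) for net, origin in routes if addr in net]
    if not matches:
        return None
    # Longest prefix (largest prefixlen) is treated as the best route.
    _, origin = max(matches, key=lambda match: match[0].prefixlen)
    return origin


# The legitimate site announces a broad block of addresses.
routes = [(ipaddress.ip_network("10.0.0.0/22"), "legitimate video site")]
before = best_route("10.0.1.10", routes)

# A second network mistakenly announces a narrower slice of the same block.
routes.append((ipaddress.ip_network("10.0.1.0/24"), "mistaken announcement"))
after = best_route("10.0.1.10", routes)

print(before)
print(after)
```

Nothing in this selection rule checks whether the announcer actually owns the addresses, so traffic follows the narrower announcement as soon as it appears.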

The last time the Internet had any kind of a major upgrade was in 1985 and 1986, when the government agency overseeing the ARPAnet started to transition it from a military project to a more general research network.

At that time, a switch was made from the existing networking address system, which could only accommodate a couple of hundred computers, to Internet Protocol version 4 (IPv4), which we still use today and which allows for more than 4 billion possible addresses.

This move marks the last time that the Internet had a mandated, coordinated change.

Unlike the nation’s airwaves, which are controlled by the FCC, the Internet has no governing body with ultimate say on its operation. So there is no ability to require a change, such as we saw earlier this year with the switch from analog to digital television.

The closest thing that the Internet has to an owner is the Internet Engineering Task Force (IETF), an advisory organization that promotes new protocols and researches fixes to vulnerabilities in the Internet infrastructure.

But because the Internet is really composed of hardware and software owned and maintained by millions – if not billions – of companies, governments, and individuals, there is no way to just “flip a switch.”

One glaring example of this weakness is the rapidly diminishing availability of those 4 billion Internet addresses. The IPv4 system may have seemed sufficient in 1985, but with China, Brazil, and India quickly connecting more people to the Web, the supply is close to running out.

A solution has existed for close to a decade: IPv6, which uses address numbers that are four times longer and would probably never run out. In fact, under IPv6, there would be enough addresses for every person on the planet to have eight for each atom in their body.
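The arithmetic behind these figures is easy to check. IPv4 addresses are 32 bits long and IPv6 addresses are 128 bits long. The population and atoms-per-body values below are rough assumptions that do not come from the article, so the final ratio is only an order-of-magnitude check on the "eight for each atom" claim.

```python
# Address lengths in bits.
ipv4_bits = 32
ipv6_bits = 128

ipv4_total = 2 ** ipv4_bits   # a little over 4 billion
ipv6_total = 2 ** ipv6_bits   # roughly 3.4 x 10^38

# Rough assumptions, not from the article.
world_population = 6.8e9      # approximate 2009 figure
atoms_per_person = 7e27       # common textbook estimate for an adult body

addresses_per_atom = ipv6_total / (world_population * atoms_per_person)

print(ipv4_total)
print(ipv6_bits // ipv4_bits)        # how many times longer an IPv6 address is
print(round(addresses_per_atom, 1))  # IPv6 addresses per atom per person
```

With these assumptions the result is a little over seven addresses per atom per person, consistent with the figure quoted above.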

Vinton Cerf believes that a move to IPv6 is critical. “[We need to implement] IPv6, so as to be ready for the run out of IPv4 sometime around 2011.”

But as Doyle points out, there is little financial incentive for the companies that provide Internet service to consumers and businesses to upgrade, because it doesn’t generate additional revenue.

He claims that basic work on improving fundamental network infrastructure has taken a back seat in our culture to the development of flashy new Internet applications, such as Twitter and Facebook.

This opinion seems to be borne out by the slow adoption of IPv6.

Although all major computer operating systems and network hardware have supported IPv6 for years, it is almost impossible to get an IPv6 address from an Internet service provider.

In a written response, Jean McManus, executive director for Verizon Network & Technology, says of IPv6 that “it is an important development that we are actively working, but Verizon has nothing to announce at this time.”

A notable exception is Comcast, which is testing residential IPv6 service with plans to roll it out generally in 2010.

Doyle thinks that what is really needed is a from-the-ground-up redesign of the underlying Internet architecture.

“To the extent I’ve been working in this field for the last 10 years, I’ve been mostly working on band-aids. I’m really trying to get out of that business and try to help the people, the few people, who are really trying to think more fundamentally about what needs to be done.”

He speculates that such a new network could coexist with the current Internet. In fact, he says, what we know today could eventually become just a small piece of a new, more secure network.

But Doyle says that there’s a lot of basic research that needs to be done before that could happen.