Why tech giants are forming an AI coalition


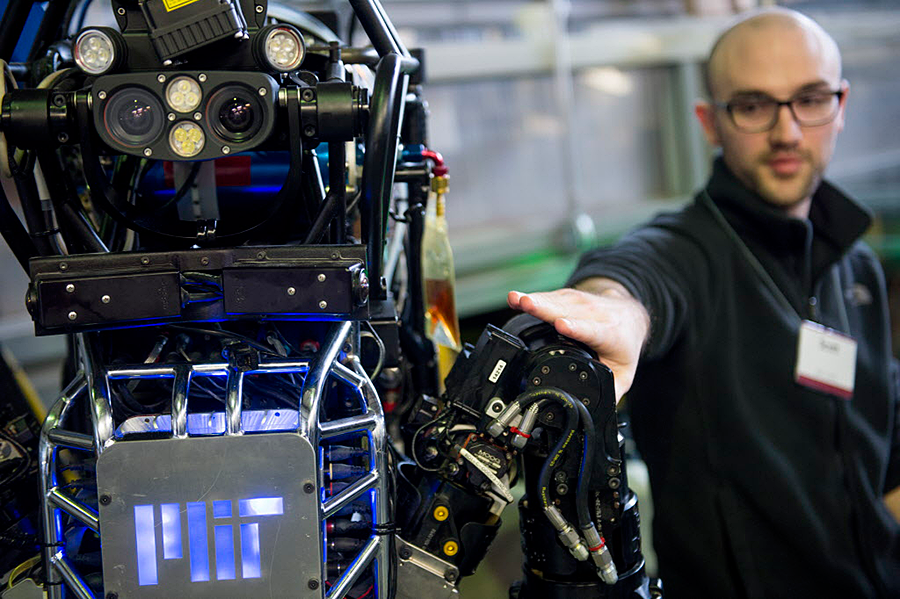

If science fiction is a reflection of our fears, then we are afraid of a robot takeover.

To counter fears about artificial intelligence replacing humans at work and at home, and to agree on industry-wide ethics for the growing technology, five of the world's largest tech companies have joined together.

“The Partnership on Artificial Intelligence to Benefit People and Society” was created by Amazon, Facebook, Google, Microsoft, and IBM, the companies announced in a statement Wednesday. Academics, nonprofit researchers, and experts in policy and ethics will also be included. Apple is in talks with the partnership but has not yet decided whether it will join, according to The Verge.

Once reserved for sci-fi dystopias, artificial intelligence has become a daily reality in the internet era, powering Apple's Siri, chatbots on Facebook Messenger, and even web searches.

It hasn’t been all good. Twitter Tay, a chatbot Microsoft introduced to Twitter, started to rattle off offensive tweets less than 24 hours after it went online. And in May, a fatal crash in Florida occurred after both the Tesla Autopilot feature and the driver behind the wheel failed to see a tractor-trailer before it was too late.

The partnership has two goals: to educate the public about AI and to recommend industry-wide ethics and best practices on issues such as the role of machine intelligence in life-threatening situations like warfare, healthcare, and transportation.

“The reason we all work on AI is because we passionately believe its ability to transform our world,” Mustafa Suleyman, one of the founders of Google DeepMind and a chair of the Partnership on AI, said in a call with the media Wednesday. “The positive impact of AI will depend not only on the quality of our algorithms, but on the amount of public discussion … to ensure AI is understood by – and benefits – as many people as possible.”

Subbarao Kambhampati, a computer science professor at Arizona State University and president of the Association for the Advancement of Artificial Intelligence (AAAI), said the coalition is timely and needed.

"There have been situations already where companies had to essentially make up their own best practices," he told NPR. "Most of the times, things work out well, but sometimes they haven’t."

Dr. Kambhampati and AAAI will have a seat on the coalition.

In addition to the Tesla crash in May and snafus by Microsoft’s Twitter Tay and Google’s facial recognition software, there has been fearful speculation that robots will replace humans in much of the workplace.

Robert Reich, the former secretary of Labor and a current professor at UC-Berkeley, wrote about this fear in 2015. Kodak’s workforce, writes Professor Reich, peaked in 1988 at 145,000 employees. When Kodak filed for bankruptcy in 2012, Instagram was serving 30 million customers with just 13 employees.

“New technologies aren’t just labor-replacing. They’re also knowledge-replacing. The combination of advanced sensors, voice recognition, artificial intelligence, big data, text-mining, and pattern-recognition algorithms, is generating smart robots capable of quickly learning human actions, and even learning from one another. If you think being a ‘professional’ makes your job safe, think again,” Reich wrote.

In January 2015, entrepreneur and Tesla founder Elon Musk donated $10 million to the Future of Life Institute (FLI) to “mitigate existential risks facing humanity” – including AI.

But industry leaders have been tackling these "existential risks" for years. In addition to this partnership, Google, Facebook, Microsoft, and IBM are also on a Stanford University coalition, the “One Hundred Year Study on Artificial Intelligence,” known as AI100, which is examining how AI will enter our lives in the next 100 years.

"Building advanced AI is like launching a rocket,” said Jaan Tallinn, co-founder of FLI and Skype, in 2015. “The first challenge is to maximize acceleration, but once it starts picking up speed, you also need to focus on steering."