Shutterstock's reverse image search promises a gentler side of AI

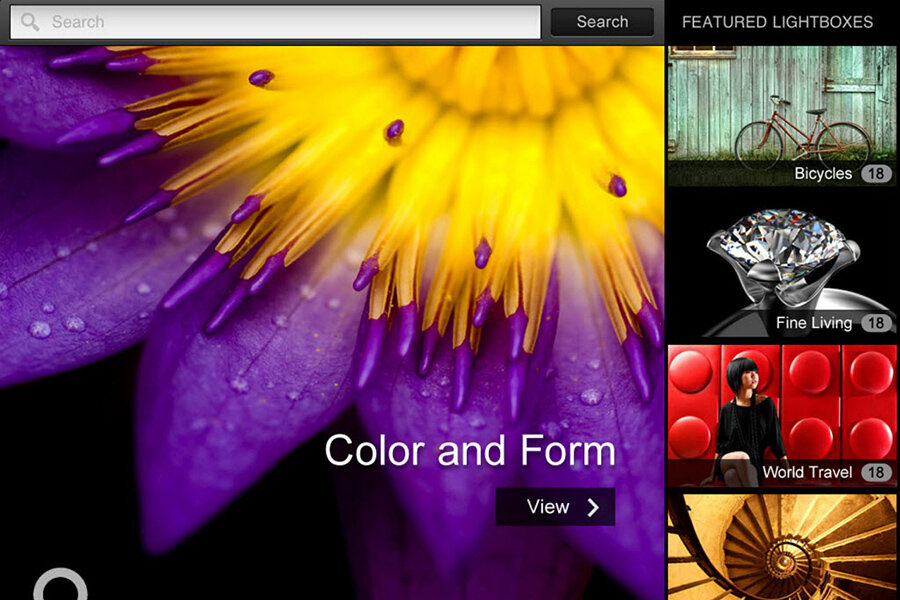

For designers and photographers, selecting and laying out photos is often subjective, requiring a keen sense of color and composition.

Using a computer algorithm, the stock footage site Shutterstock hopes to make that process easier. It now offers a reverse image search tool that analyzes the pixels in a photo and returns images that are similar in “look and feel” to the original without requiring a user to type in keywords to search.

In a demonstration video, the company drags a photo of a stained-glass cathedral window into the search box, and the tool returns a series of related images that closely match the original in color and composition.

The new search engine uses a customized convolutional neural network, a type of machine learning tool loosely modeled on how the visual cortex in animal brains processes images.
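To give a rough sense of what the "convolutional" part means, here is a minimal sketch in plain Python. It slides a small grid of weights (a kernel) across a grayscale image and sums the products at each position, which is the basic operation such a network repeats many times. The function name `convolve2d`, the hand-picked edge kernel, and the tiny sample image are illustrative assumptions, not anything from Shutterstock's system; a real network learns its kernels from data rather than having them written by hand.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (both lists of lists of numbers).

    This is the unflipped sliding-window sum that neural network
    libraries conventionally call a convolution. No padding is used,
    so the output is smaller than the input.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        output.append(row)
    return output


# Hypothetical kernel that responds to dark-to-light vertical edges.
VERTICAL_EDGE = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

# A 4x4 image: dark on the left (0), light on the right (1).
edge_image = [[0, 0, 1, 1] for _ in range(4)]
# A uniformly gray image with no edges at all.
flat_image = [[1, 1, 1, 1] for _ in range(4)]

edge_response = convolve2d(edge_image, VERTICAL_EDGE)
flat_response = convolve2d(flat_image, VERTICAL_EDGE)
```

The image with an edge produces a strong response everywhere the kernel overlaps the boundary, while the flat image produces none. Stacking many such filters in layers is what lets a network pick up progressively more complex visual patterns.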

While much attention has focused on the large-scale benefits – and possibly the harms – of artificial intelligence, researchers have found that neural networks are particularly adept at tasks humans may take for granted, such as recognizing subtle features in a photo.

In what’s known as computer vision, a computer analyzes an image and breaks it down into its primary characteristics, such as colors or shapes, which are then represented in a series of numbers that can be analyzed.
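As a simplified illustration of representing an image as "a series of numbers," the sketch below reduces each image to a color histogram and ranks a small catalog by how closely each histogram matches a query image. This is a deliberately basic stand-in, not Shutterstock's method: the company describes using a neural network, and the function names, the bin count, and the toy pixel data here are all assumptions made for the example.

```python
import math


def color_histogram(pixels, bins=4):
    """Summarize (r, g, b) pixels (values 0-255) as a normalized vector.

    Each color channel is split into `bins` ranges, giving bins**3 buckets.
    """
    hist = [0.0] * (bins ** 3)
    step = 256 // bins
    for r, g, b in pixels:
        idx = (r // step) * bins * bins + (g // step) * bins + (b // step)
        hist[idx] += 1.0
    total = len(pixels)
    return [h / total for h in hist] if total else hist


def cosine_similarity(a, b):
    """Return 1.0 for vectors pointing the same way, 0.0 for no overlap."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_by_similarity(query_pixels, catalog):
    """Sort catalog entries (name -> pixels) from most to least similar."""
    query_vec = color_histogram(query_pixels)
    scored = [
        (name, cosine_similarity(query_vec, color_histogram(pixels)))
        for name, pixels in catalog.items()
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Toy data: each "image" is just a handful of pixels.
query = [(250, 10, 10)] * 8 + [(240, 200, 20)] * 2       # mostly red
catalog = {
    "red_sunset": [(245, 20, 15)] * 7 + [(230, 210, 30)] * 3,
    "blue_sea": [(10, 20, 240)] * 10,
}
ranking = rank_by_similarity(query, catalog)
```

Here the mostly red query ranks `red_sunset` ahead of `blue_sea` without any keywords involved. A histogram captures only overall color, so it says nothing about composition or subject matter, which is where learned features from a neural network come in.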

The process is still far from the capabilities of human vision, but it can have a number of uses.

Google and other tech companies have used computer vision techniques to build a tool that picks the best YouTube thumbnail, and to “learn” to recognize an image of a cat without any previous knowledge of what a cat looks like.

Shutterstock says its reverse image search marks a large-scale improvement over its previous search engine, which worked by analyzing keywords attached to each image by people who uploaded photos to the site.

“That keyword data, while useful for indexing images into categories on our site, wasn’t nearly as effective for surfacing the best and most relevant content,” says Kevin Lester, vice president of engineering at the company, in a blog post. “So our computer vision team worked to apply machine learning techniques to reimagine and rebuild that process.”

The neural network has now examined 70 million images and 4 million video clips in Shutterstock's collection. The company says it hopes to roll out a video search feature soon.

“As the technology continues to learn and recognize what's inside an image or a clip, it promises more possibilities,” says Shutterstock chief executive and founder Jon Oringer in a statement. “We know we've only scratched the surface in how we use this deep machine learning to better understand and serve our customers.”