Islamic State militants turn to alternative social network in wake of ban from Twitter


In the wake of bans from traditional social media sites like Twitter, members of the militant group the Islamic State have turned to a smaller, more diffuse platform called Diaspora.

"Various newspapers have reported that members of the Islamic State (IS) have set up accounts on diaspora* to promote the group's activities," reads a Wednesday blog post on Diaspora. "In the past, they have used Twitter and other platforms, and are now migrating to free and open source software (FOSS)."

Unlike mainstream social media sites, Diaspora has no central server. Instead, the decentralized network relies on a series of smaller servers, each of which is responsible for moderating the content on its respective site. And because Diaspora is an open-source initiative, meaning people can use the software however they choose, the core members of the Diaspora administrative team do not have a unified way of removing unwanted content from each individual node in the network, or what Diaspora calls a "pod."

That means the administrators, regardless of their philosophy for running their site, cannot exert direct influence over what gets posted to, or taken down from, individual pods.

"This may be one of the reasons which attracted IS activists to our network," the post reads.

The Diaspora team emphasizes it does not want IS content on its servers. It says it has accumulated a list of IS-related accounts and that it has been speaking with individual pod administrators, known as "podmins," urging them to remove accounts and posts related to IS.

Still, it admits there is no fail-safe way to remove this content due to the open-source nature of the network.

"We will continue our efforts to talk with the podmins, but we want to emphasize once again that the project's core team is not able to decide what podmins should do," the post adds. Though in an e-mail, Diaspora spokesman Dennis Schubert said he is "currently not aware of any active user accounts sharing IS related content on diaspora*."

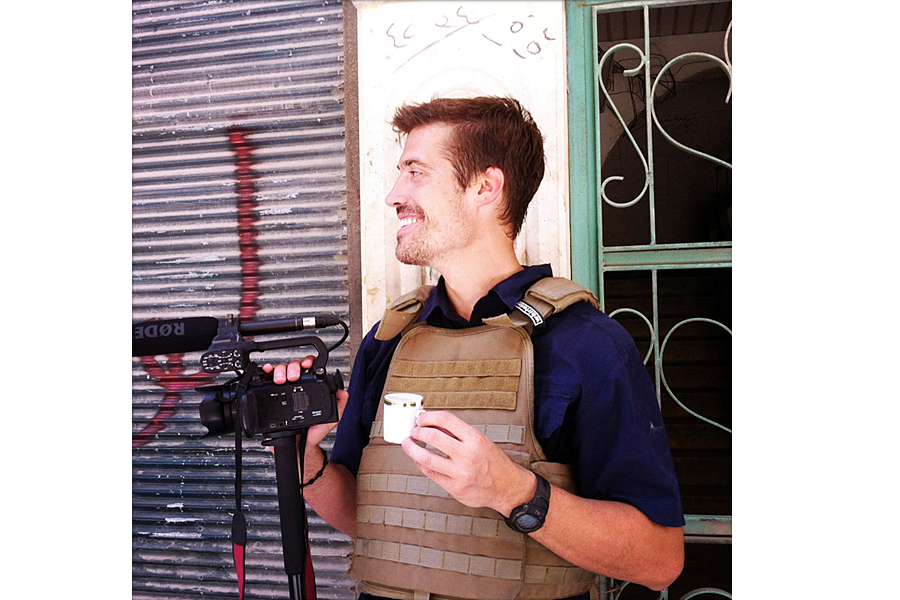

This follows the circulation, and subsequent removal, earlier this week of a gruesome video on social media sites that showed the beheading of American journalist James Foley, who was executed by IS.

The video was widely shared on Twitter and could be easily found on YouTube.

Soon thereafter, Twitter said it was suspending accounts that posted the video. YouTube took it down and has an algorithm in place to stop it from being uploaded again. Twitter also announced a new policy stating that family members could petition to have images of deceased family members removed from the site.

Similarly, in the ongoing conflict between Israel and Gaza, part of which has played out in a media war between the Palestinian militant group Hamas and the Israel Defense Forces, Facebook and Twitter have suspended several Hamas accounts. Twitter also shut down Hamas accounts during the fighting that broke out between Israel and Gaza in 2012.

Officially, Twitter's rules state that it "does not screen content and does not remove potentially offensive content." But it prohibits the publication of violent or pornographic imagery as well as threats of violence against others.

According to Twitter's Terms of Service, users are prohibited from using the site if they are "a person barred from receiving services under the laws of the United States or other applicable jurisdiction." This includes a group like Hamas, classified by the American government as a terrorist organization, preventing it from accessing US commercial products.

Facebook, too, prohibits classified terrorist groups from participating on its social network.

"We don’t allow terrorist groups to be on Facebook," Facebook spokesman Israel Hernandez, said in a July interview with The Christian Science Monitor. "There are no pages of Hamas on Facebook."

Neither Twitter nor Facebook responded to requests seeking comment for this article.

And yet, when Twitter functions as a crucial tool for disseminating the news, the practice of removing newsworthy content raises questions of censorship, as accounts are suspended even for posting content related to militant groups like Hamas and IS. Moreover, some argue that following the social-media trails of these groups can be a powerful tool for understanding and countering their operations.

More broadly, this practice of removal raises an ethical question: Do sites like Twitter, a public company, run operations that conflict with their important journalistic roles?

"Twitter is not a journalism site and thus it does not declare a set of journalistic ethics," says David Weinberger, a senior researcher at Harvard University's Berkman Center for Internet & Society. He says that while he believes a private site has every right to moderate its content to foster the kind of online conversation it wants, a site like Twitter has a reach so vast and so influential that it needs to consider guidelines beyond its own self-interest. Specifically, he says Twitter has a responsibility to follow journalistic norms that include leaving potentially disturbing content on its platform in order to benefit the public interest.

"[Militant groups] are a case when [a site like Twitter] is torn between having the sort of control over its content that a small site exercises through its rules of the pool," he says. "On the one hand, it wants to have the sorts of rules that will enable the sorts of conversation it was designed for and on the other hand it's gotten so big that it fulfills some of the journalistic guidelines as well."

He adds: "It's difficult to know what guidelines apply."