AI in the real world: Tech leaders consider practical issues.


The discussion of artificial intelligence has been flooded with concerns about “singularity” and futuristic robot takeovers. But how will AI affect our lives five years from now, rather than 50?

That’s the focus of Stanford University’s One Hundred Year Study on Artificial Intelligence, or AI100. The study, which is led by a panel of 17 tech leaders, aims to predict the impact of AI on day-to-day life – everywhere from the home to the workplace.

“We felt it was important not to have those single-focus isolated topics, but rather to situate them in the world because that’s where you really see the impact happening,” Barbara Grosz, an AI expert at Harvard and chair of the committee, said in a statement.

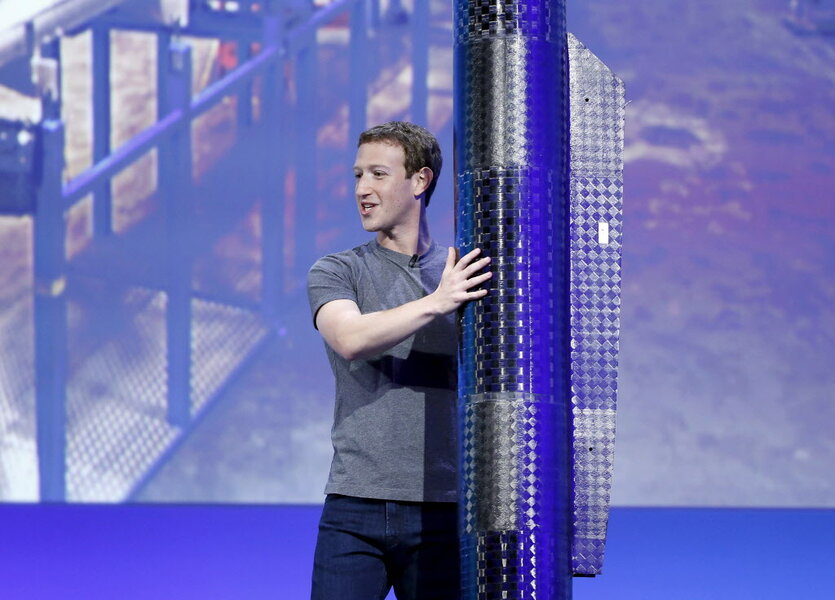

Researchers kicked off the study with a report titled “Artificial Intelligence and Life in 2030,” which considers how advances like delivery drones and autonomous vehicles might integrate into American society. The panel – which includes executives from Google, Facebook, Microsoft and IBM – plans to amend the report with updates every five years.

For most people, the report suggests, self-driving cars will be the technology that brings AI to mainstream audiences.

“Autonomous cars are getting close to being ready for public consumption, and we made the point in the report that for many people, autonomous cars will be their first experience with AI,” Peter Stone, a computer scientist at the University of Texas at Austin and co-author of the Stanford report, said in a press release. “The way that is delivered could have a very strong influence on the way the public perceives AI for the years coming.”

Stone and colleagues hope that their study will dispel misconceptions about the fledgling technology. They argue that AI won’t automatically replace human workers – rather, that it will supplement the workforce and create new jobs in tech maintenance. And just because an artificial intelligence can drive your car doesn’t mean it can walk your dog or fold your laundry.

“I think the biggest misconception, and the one I hope that the report can get through clearly, is that there is not a single artificial intelligence that can just be sprinkled on any application to make it smarter,” Stone said.

The group has also considered regulation. Given the diversity of AI technologies and their wide-ranging applications, panelists argue that a one-size-fits-all policy simply wouldn’t work. Instead, they advocate increased public and private spending on the industry, and recommend building AI expertise at all levels of government. The group is also working to create a framework for self-policing.

“We’re not saying that there should be no regulation,” Stone told The New York Times. “We’re saying that there is a right way and a wrong way.”

But there are other issues, some even trickier than regulation, which the study has not yet considered. AI applications in warfare and “singularity” – the notion that artificial intelligences could surpass human intellect and suddenly trigger runaway technological growth – did not fall within the scope of the report, panelists said. Nor did it focus heavily on the moral status of artificially intelligent agents themselves.

No matter how “intelligent” they become, AIs are still based on human-developed algorithms. That means that human biases can be infused in a technology that would otherwise think independently. A number of photo apps and facial recognition programs, for example, have been found to misidentify nonwhite people.

“If we look at how systems can be discriminatory now, we will be much better placed to design fairer artificial intelligence,” Kate Crawford, a principal researcher at Microsoft and co-chairwoman of a White House symposium on society and AI, wrote in a New York Times op-ed. “But that requires far more accountability from the tech community. Governments and public institutions can do their part as well: As they invest in predictive technologies, they need to commit to fairness and due process.”

As it turns out, there are already groups dedicated to tackling these ethical concerns. LinkedIn founder Reid Hoffman has collaborated with the Massachusetts Institute of Technology Media Lab, both to explore the socioeconomic effects of AI and to design new tech with society in mind.

“The key thing that I would point out is computer scientists have not been good at interacting with the social scientists and the philosophers,” Joichi Ito, the director of the MIT Media Lab, told The New York Times. “What we want to do is support and reinforce the social scientists who are doing research which will play a role in setting policies.”