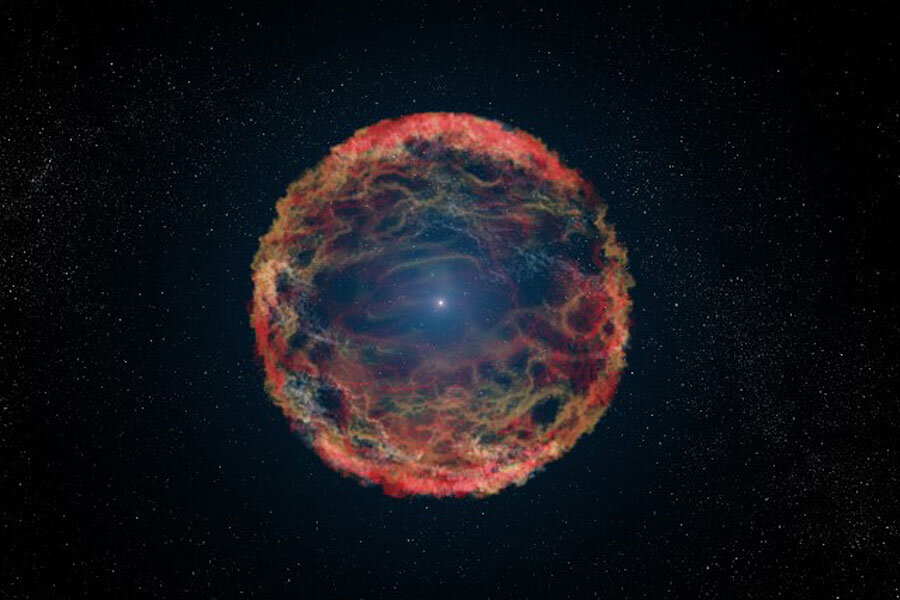

Spectacular supernova may have helped usher in mass extinction

A faraway supernova explosion may have contributed to a minor mass extinction here on Earth 2.59 million years ago, a new study suggests.

Fast-moving, charged particles called cosmic rays that were blasted out by a supernova may have played a role in the climatic changes that apparently led to a die-off at the end of the Pliocene epoch and the start of the Pleistocene, researchers said.

"Africa dried out, and a lot of the forest turned into savannah. Around this time and afterwards, we started having glaciations (ice ages) over and over again, and it's not clear why that started to happen," study co-author Adrian Melott, of the University of Kansas, said in a statement. "It's controversial, but maybe cosmic rays had something to do with it."

Melott and his colleagues — led by Brian Thomas, of Washburn University in Kansas — conducted computer simulations that modeled how supernovas might affect Earth's climate and biosphere. (Supernovas can occur in two ways: when a star much more massive than the sun runs out of fuel and dies, or when a superdense stellar corpse called a white dwarf steals so much matter from a nearby companion star that it crosses a mass threshold and explodes.)

The researchers were particularly interested in supernovas that occur about 300 light-years from Earth, because scientists think such events flared up twice relatively recently — once 6.5 million to 8.7 million years ago, and again 1.7 million to 3.2 million years ago. (This latter supernova is the one that may be tied to the Pliocene-Pleistocene extinction.)

"I was expecting there to be very little effect at all," Melott said, noting that 300 light-years is not very close.

The results were therefore surprising. The team's simulations suggest, for example, that such supernovas bathed the night sky in blue light so bright that it disrupted animals' sleep patterns for weeks. And, more importantly, a surge of radiation probably hit organisms on land and in the shallower parts of the ocean.

"The big thing turns out to be the cosmic rays," Melott said. "The really high-energy ones are pretty rare. They get increased by quite a lot here — for a few hundred to thousands of years, by a factor of a few hundred. The high-energy cosmic rays are the ones that can penetrate the atmosphere. They tear up molecules. They can rip electrons off atoms, and that goes on right down to the ground level. Normally, that happens only at high altitude."

The end result was likely a tripling of the overall radiation dose at ground level, the researchers found. This may have been enough to increase cancer and mutation rates, "but not enormously," Melott said. "Still, if you increased the mutation rate, you might speed up evolution."

This radiation may also have affected Earth's climate, the study said. The team's simulations suggest that the increased flux of cosmic rays caused many of the atoms and molecules in the lowest layer of Earth's atmosphere (known as the troposphere) to acquire a positive or negative charge.

This "tropospheric ionization" likely lasted for at least 1,000 years, the researchers said.

"It is possible that this could trigger climate change, especially if instability was already present," they wrote in the new study, which appears in The Astrophysical Journal Letters. (A free preprint is available on arXiv.org.)

The Pliocene-Pleistocene extinction was minor as mass extinctions go. Previous studies have suggested that a supernova would have to be quite close to Earth, within 25 light-years or so, to trigger a major mass extinction such as the one about 66 million years ago that marked the end of the age of dinosaurs.

Originally published on Space.com.

Copyright 2016 SPACE.com, a Purch company. All rights reserved. This material may not be published, broadcast, rewritten or redistributed.