Hospital shares patient scans with Google: Can they do that?


An eye hospital in London is entering the debate over patient privacy by sharing images of patients' retinas with a Google-owned artificial intelligence project.

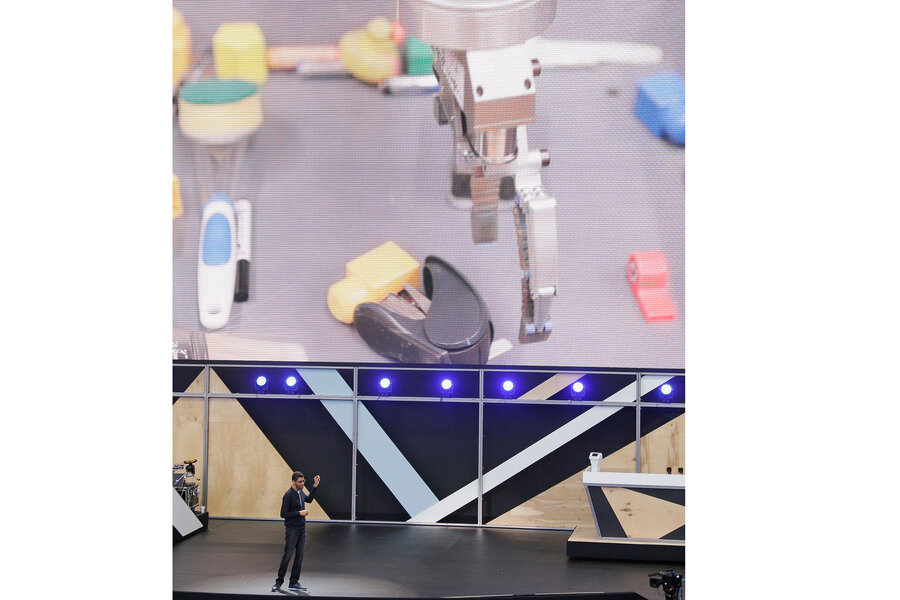

Moorfields Eye Hospital is anonymizing patient information and then sharing it with DeepMind, a Google-owned machine-learning company that plans to use the hospital's non-invasive retina scans to train its systems, which must process thousands upon thousands of images to "learn" what a healthy eye looks like.

While patients consented to general research, privacy advocates have expressed concern that they may not have realized the extent to which their personal information, even scans of their eyes, would be handed over to an outside party such as Google.

The hospital has taken an important first step by informing patients before the project begins, likely a lesson learned from a previous medical research project in Britain. In an earlier data-sharing partnership between DeepMind and three other London hospitals, patients learned only afterward, and haphazardly, that their data had been involved, the BBC reported.

Researchers are learning that informing patients ahead of time and letting them opt out are both essential to protecting privacy, says Lysa Myers, a security researcher at the software company ESET.

"There is a lot of potential good to be gained in analyzing medical data, but we just really need to be conscious of making people aware of what is going on, so everyone can participate at a level they feel comfortable with," Ms. Myers tells The Christian Science Monitor.

The hospital has also promised that the patient data will be encrypted and stored by a secure third party, which Myers says is another sign of attention to security.

To lend the research credibility, Google has also sought the support of several British charities, which welcome the possible progress for medical research. The catch is that AI programmers need real patient records to train their systems, and the hospital is sharing 1 million eye scans, along with anonymized information on patients' diagnoses, treatments, and ages. Researchers say the project could both speed up eye diagnoses and advance the development of AI, but privacy advocates insist hospitals should ask before offering patient data to Google.

But Rory Cellan-Jones, a BBC correspondent who has been visiting Moorfields for 10 years, suggested that while he is willing to give up some privacy for the sake of medical research, not everyone shares that sentiment, which is why patients need to understand specifically how their data is being used and how, and with whom, it is being shared.

"Now, I can imagine that some people who would be generally happy to see their data used in research will still be uneasy about it going to Google, whatever the guarantees of anonymity," he wrote. "Patients can opt out – but that would mean withholding data from all projects, rather than just this one."

The hospital says that anonymous data-sharing falls within health laws, provided only the engineers and scientists working on the project can access the images.

The promise of anonymity has not satisfied everyone: technology journalist Gareth Corfield said on Twitter that he "hit the roof" upon hearing the news.

These questions of privacy and research reach far beyond the eye patients of London. The Mayo Clinic has a research partnership with IBM Watson, and Massachusetts General Hospital began working with the computer technology company Nvidia in April, with plans to use images and data to develop "deep learning algorithms" for radiology and pathology.

Under US law, the key factor in whether such research is legal is whether it is published, says Jeffery Smith, vice president of public policy at the Maryland-based American Medical Informatics Association. Hospital staff may review patient records internally for quality control, or even hire an outside company for analysis, so long as the data stays anonymous, but they would need a special exemption under a 1991 law to publish the research.

Updating the law became a focus for both the Obama administration and Congress in 2015, but balancing patient privacy against the vast research potential of digitized records remains a sticking point.

"The larger debate ... is definitely trending towards making sure patients are informed and can opt out if they want to," Mr. Smith tells The Monitor. "[The policy debate] will be with us for some time."