Machines that learn: The origin story of artificial intelligence

Lee Sedol, a world champion in the Chinese strategy board game Go, faced a new kind of adversary at a 2016 match in Seoul.

Developers at DeepMind, an artificial intelligence startup acquired by Google, had fed 30 million Go moves into a deep neural network. Their creation, dubbed AlphaGo, then figured out which moves worked by playing millions of games against itself, learning at a faster rate than any human ever could.

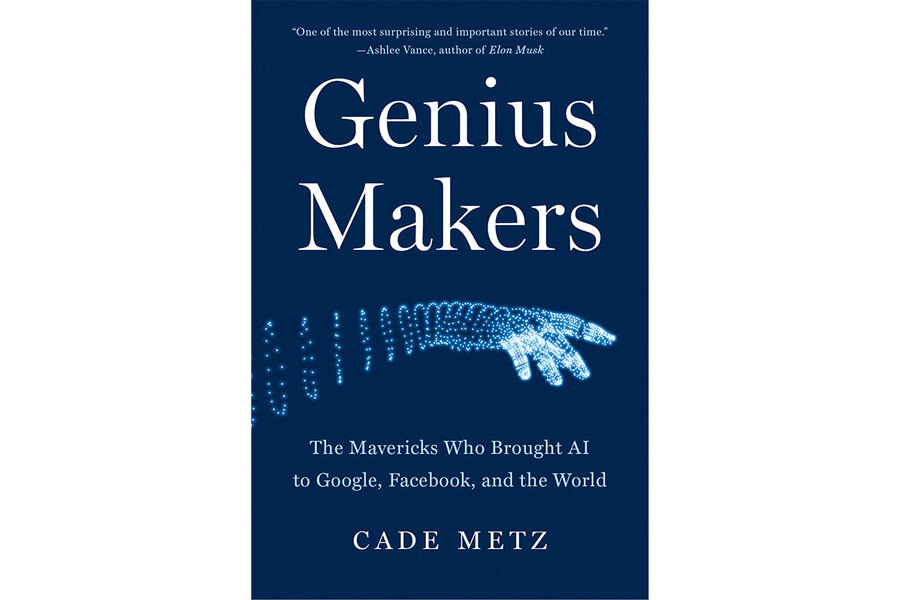

The match, which AlphaGo won 4 to 1, “was the moment when the new movement in artificial intelligence exploded into the public consciousness,” technology journalist Cade Metz writes in his engaging new book, “Genius Makers: The Mavericks Who Brought AI to Google, Facebook, and the World.”

Metz, who covers AI for The New York Times and previously wrote for Wired magazine, is well positioned to chart the decades-long effort to build artificially intelligent machines. His straightforward writing perfectly translates industry jargon for technologically un-savvy readers (like me) who might be unfamiliar with what it means for a machine to engage in “deep learning” or master tasks through its own experiences.

Metz chronicles the mad 21st-century gold rush of AI, in which American frontrunner Google has competed domestically (against rivals like Facebook and Microsoft) as well as internationally (against Chinese competitors like Baidu). Each company has spent billions on research, gobbled up startups, and attempted to lure a small pool of talent with the kind of money and urgency usually associated with top NFL prospects. China has announced plans to be the world leader in AI by 2030.

But it wasn’t always so frenetic. Metz traces the origins of AI to 1958, when a Cornell professor successfully taught a computer to learn. The machine was as wide as a kitchen refrigerator, and was fed cards marked with small squares on either the left or the right side. After reading about 50 of them, it began to correctly identify which cards were which – thanks to programming based on the human brain.

Expectations outran the technology of the era, and the study of so-called neural networks capable of replicating human intelligence lay largely fallow in subsequent decades. Even so, by 1991 the technology had advanced to the point that a machine could learn to identify connections on a family tree or drive a Chevy from Pittsburgh to Erie, Pennsylvania.

As interest in AI waxed and waned, early progress in the field came from just a handful of scientists. Metz focuses on Geoffrey Hinton, a British-born Canadian scientist who sold his startup to Google and subsequently won the Turing Award – the Nobel Prize of computing.

Metz’s description of Hinton and the many graduate students he trained at the University of Toronto is a window into the work of a genius. Another pioneer, Demis Hassabis, a co-founder of DeepMind, started off creating computer games before setting out to build “artificial general intelligence” capable of doing anything the human brain could do.

Artificial intelligence, which is still in its infancy, has already remade speech and image recognition and is helping Big Tech companies predict what words you’ll type in an email or what ads you’ll click on next.

The potential is immense. So are the risks, and Metz touches on some of the pitfalls that have already emerged.

For one thing, AI’s output is only as good as the information used to train it. Big Tech companies have, in many instances, relied disproportionately on photos of white men to train photo-recognition tools. That practice led to the awful moment in 2015 when Google Photos labeled pictures of Black people as “gorillas.”

And the same technology that helps self-driving cars identify pedestrians might also help make drone strikes more accurate. Google faced an internal revolt over plans to work with the U.S. Department of Defense.

Metz cites New York Times reporting that the Chinese government worked with AI companies to build facial-recognition technology that could help track and control its minority Uighur population.

For the most part, though, Metz focuses less on the ethics of AI – and its potentially troubling future applications – than he does on how researchers got to the present moment.

Perhaps there will come a day when artificially intelligent robots can read Metz’s book as a history of the baby steps that got them there: viewing millions of YouTube videos to learn how to recognize cats and mastering games like Go.

Seth Stern is an editor at Bloomberg Industry Group.