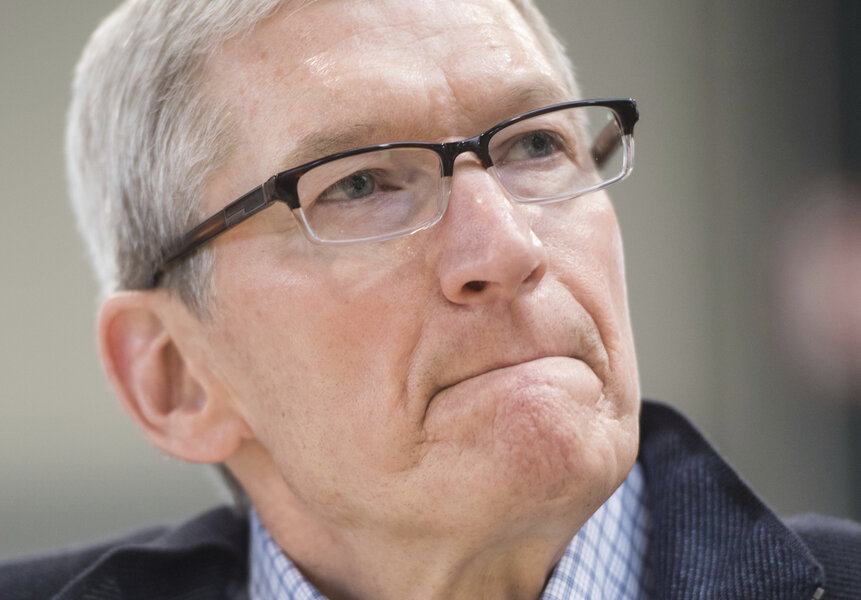

Apple's Tim Cook joins the fake news war

Fake news. Even the feuding President Trump and CNN agree it’s a problem, although they might not see eye to eye on how to define it, let alone fix it.

Perceived media inaccuracy and media distrust are swirling into a perfect storm that threatens to undermine the public trust and sow seeds of doubt in the very notion of fact itself.

Apple's chief executive officer Tim Cook recently said that fake news is “killing people’s minds,” perhaps signaling Apple’s intention to enter the fray alongside the likes of Facebook and the governments of Ukraine and Germany. But can faith in online information be restored with a top-down approach?

If technology and government oversight represent two fronts in the war on fake news, education is rapidly opening up a third front. A push to teach people to separate fake from real, as well as opinion from fact, might sidestep thorny issues of censorship and institutional distrust.

Fake news became news itself in the aftermath of the US election. At times outperforming real news, fake headlines proved believable three times out of four. One study found such stories to be dozens of times less effective than a standard TV spot at changing opinions. Even so, many worried about their potential impact on the election, since the same study found that the average American saw and remembered 0.92 pro-Trump fake stories, but only 0.23 pro-Clinton ones.

While fake news skewed right during the election, it’s a bipartisan problem. Craig Silverman of Buzzfeed News, who has studied media inaccuracy for years, explained to NPR why we’re all susceptible:

“We love to hear things that confirm what we think and what we feel and what we already believe. It's – it makes us feel good to get information that aligns with what we already believe or what we want to hear.

“And on the other side of that is when we're confronted with information that contradicts what we think and what we feel, the reaction isn't to kind of sit back and consider it. The reaction is often to double down on our existing beliefs.”

He then goes on to explain the role social media, especially Facebook, plays in amplifying those stories. Behind the scenes, algorithms are always working to better understand what content we want to engage with, and Facebook delivers us its best guesses. The more we click on fake news stories that play on our emotions and partisanship, the more Facebook will show us.

That vicious cycle is why many first looked to technology companies for a solution. Facebook, for example, has already fired its opening salvo with a feature that lets community members report a story as fake for third-party fact-checking via websites like Snopes.

Some wonder if a Facebook-endorsed fake news label might become a "badge of honor" for political fringe sites, but at the very least it disqualifies those stories from receiving ad revenue, a common motivation for spammers who get paid per click.

In his comments, Cook agreed that companies like Facebook and Apple have an important role to play, but he also highlighted a key challenge in implementing such features.

“All of us technology companies need to create some tools that help diminish the volume of fake news. We must try to squeeze this without stepping on freedom of speech and of the press, but we must also help the reader.”

Any group interested in stopping fake news quickly finds itself in the sticky position of declaring itself an arbiter of truth.

Free speech activists are already sounding the alarm that European governments may be leaning Orwellian with discussion of fines or even prison terms for spreading fake news in Italy and Germany. Even if regulators aim these tools only at fraudulent websites now, future lawmakers may have other ideas. Imagine if Trump could shut down CNN with an executive order.

In addition to the top-down approaches embraced by Facebook and European lawmakers, a new bottom-up educational strategy is gaining popularity, and was recently tested on the target of Russia’s first major misinformation campaign: Ukraine.

In response to an influx of Russian "news" surrounding the annexation of Crimea, the Ukrainian government started with outright censorship of Russian sources. Then last year, Canada partnered with nonprofit IREX to organize workshops that taught more than 15,000 Ukrainians how to spot fake news and do their own fact-checking.

Preliminary results spark hope. An education start-up aimed at improving literacy through news has taken on a secondary goal of teaching kids to evaluate the trustworthiness of news by asking questions about where articles get their facts, or what their bias is.

Research suggests that these kinds of awareness-raising questions may be effective, finding that a disclaimer reminding readers an issue is controversial can help people to read more critically, and better resist misinformation.

Entrenched partisanship makes it difficult to sort news organizations into black and white categories such as “fake” or “trustworthy,” but the notion of teaching people to make that distinction for themselves could be easier to swallow for those on both the left and the right.

Cook agrees that this third front is essential.

“It has to be ingrained in the schools, it has to be ingrained in the public. There has to be a massive campaign. We have to think through every demographic... It’s almost as if a new course is required for the modern kid, for the digital kid.”