A new step forward for robots

For the past 30 years, scientists and technicians have grappled with making robots walk on two legs. Humans do it effortlessly, but the simple act has a lot of hidden complexity. And until recently, computers were very bad at it.

Now, several teams across the country are refining the first generation of robots that are close to walking like people. That includes the ability to recover from stumbles, resist shoves, and navigate rough terrain.

In walks PETMAN, designed by Boston Dynamics in Waltham, Mass. The two-legged robot saunters with uncanny realism. The android has no upper body, just steel and plastic legs attached to a system of power cables. But it walks on its own, using the same heel-to-toe motion that humans use. When pushed from the side, PETMAN sidesteps to recover its balance. The robot even wears shoes.

Why make a robot like this? Researchers say walking robots provide them with a benchmark to gauge engineering precision, a chance to improve the lives of older people, and the ability to create more useful machines capable of navigating a world built for humans.

In PETMAN’s case, the Army, which funds the project, needs a machine that can simulate realistic human motions to test dangerous equipment such as chemical protection gear. Boston Dynamics plans to deliver a version with a head and a torso by early 2011.

Marc Raibert, founder and president of Boston Dynamics, says the secrets of balance were almost a complete mystery back in 1980, when he and other robotics experts started the Leg Lab at Carnegie Mellon University in Pittsburgh. In 1986 he moved the Leg Lab to the Massachusetts Institute of Technology in Cambridge, Mass.

With “a lot of the first walking robots, [scientists] tried to make them like a table,” he says. Researchers thought that robots should be permanently stable. These early efforts attempted to program exactly where each foot should fall and to calculate all the possibilities ahead of time – but humans and other animals don’t work that way. Instead, we are actually in a kind of controlled fall, using our feet to sense how best to regain balance after each step.

So, the students at the Leg Lab tried different tacks. They created a succession of robots ranging from stiff-legged machines that bounced to droids that looked progressively more natural. Boston Dynamics’ first successes, such as Big Dog, jogged on four legs. Big Dog, a military project designed to carry heavy loads across rough terrain, can negotiate snow, forests, and rocky hills – terrain that might stymie wheeled vehicles.

Until recently, moving over anything but level ground was out of the question for most robots. Perhaps the most famous walking humanoid robot, ASIMO, was introduced as a prototype by Japanese car company Honda in the early 1990s. Even after years of revisions and learning to run, ASIMO can still be pushed over easily, struggles with uneven terrain, and moves with a bent-knee gait that doesn’t look much like normal walking.

Leg Lab alumni have since fanned out to several different universities and corporations. One of them, Jerry Pratt, is a research scientist at the Institute for Human and Machine Cognition in Pensacola, Fla.

Mr. Pratt says that humans can react to stumbles very quickly – faster than most machines. When you fall or lose your balance, it only takes about 0.43 seconds to respond. Current robots take as much as 0.6 seconds. That’s a lot of time when tumbling.

“It’s really a key requirement when you’re talking about push recovery,” he says. To Pratt, the impressive thing about PETMAN and Big Dog – which he did not work on – is the speed at which they can move their legs in several directions.

On top of that, human legs don’t really flex. They are actually more of a pendulum, swinging relatively freely until you put pressure on them.

At that point, instinct kicks in. Your feet can land anywhere in a wide area without fear of losing balance, because they shift to keep stable. Yet most robots still have feet more reminiscent of a tripod’s stumps than an animal’s paws. Pratt says the key was figuring out how to program the robots so that they readjust after their feet hit the ground.

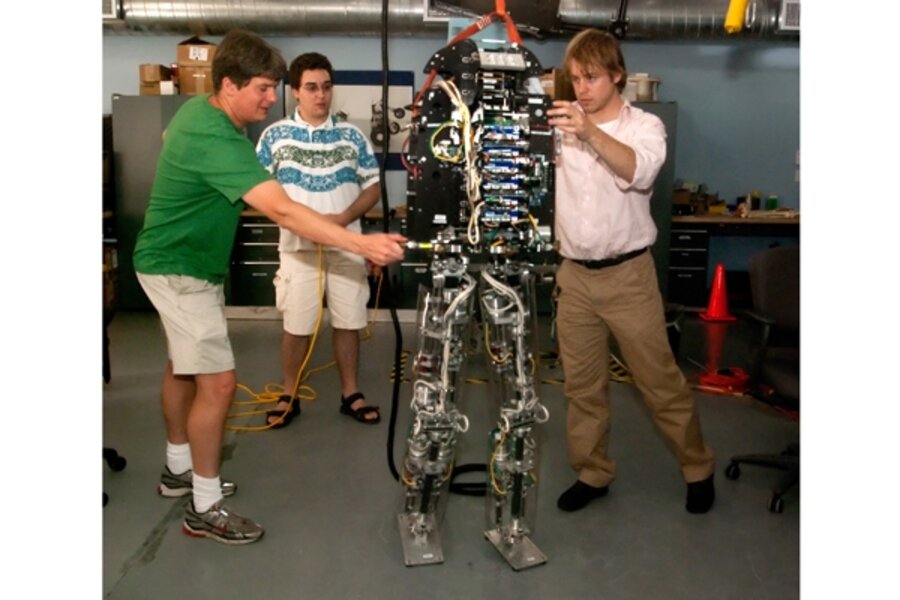

His robot, M2V2, was started at the Leg Lab. Since then, he has improved the design and can get the machine to stand on one foot, and even shift side to side when elbowed.

M2V2’s nimble feet came from Bucknell University, where interim dean of engineering Keith Buffinton and his team designed a foot with pressure sensors. This new development gives M2V2 enough information to judge how to adjust balance. A flat-footed robot can’t do that.

All of this has uses beyond creating humanoid robots. Chris Atkeson, a professor at the Robotics Institute at Carnegie Mellon University, says his goal is to find out why older people tend to fall. If he could properly simulate that in a robot, he could test theories and develop new ways to help. “This is about understanding people,” he explains.

Similarly, in Japan, ASIMO serves a higher purpose than just entertaining during a press conference. Honda spokeswoman Alicia Jones notes that ASIMO’s development helped pave the way for devices designed to assist older people – a big concern in a country with a rapidly aging population.

Mr. Atkeson’s group employs motion-capture technology – similar to what Hollywood uses for realistic computer graphics – to figure out exactly how humans operate. He says there are two things that immediately distinguish humans from current-generation robots.

First is the ability to “damp” motion. If you slap a person’s palm, for instance, the hand will move a little, but only for a second before it goes back into position.

“Humans are well damped,” he says. “We have to find a way to absorb impact energy” in robots.

Second, to have any power, a robot’s motors end up moving very slowly because they are in low gear all the time. That’s a big reason it’s hard for robots to move as fast as people react.

Another reason to make machines look humanoid is that, for robots to be useful, they have to move through a world designed for people. Houses aren’t built for wheels or creatures wider than they are tall.

Pratt adds that a bipedal robot could even replace humans in risky jobs such as space exploration – where having legs makes machines a lot more effective on rough terrain.

He notes that when the National Aeronautics and Space Administration sent the Pathfinder probe to Mars, the rover was not able to descend into some craters because it might never have gotten out again.

A legged robot – with either two or four feet – would have had no trouble, Pratt says.